Network topology is the arrangement of the elements (links, nodes, etc.) of a communication network. It can be used to define or describe the arrangement of various types of telecommunication networks, including command and control radio networks, industrial fieldbuses, and computer networks. Network topology is the topological structure of a network and may be depicted physically or logically. It is an application of graph theory in which communicating devices are modeled as nodes and the connections between the devices are modeled as links or lines between the nodes. Physical topology is the placement of the various components of a network (e.g., device location and cable installation), while logical topology illustrates how data flows within a network. Distances between nodes, physical interconnections, transmission rates, or signal types may differ between two networks, yet their topologies may be identical. A network’s physical topology is a particular concern of the physical layer of the OSI model.
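The graph view described above can be sketched in a few lines of Python. This is only an illustration: the node names, link lengths, and rates are made-up values, and the comparison assumes both networks use the same node labels (a general comparison would need a graph isomorphism check).

```python
# Illustrative sketch: a topology reduced to its graph structure.
# Node names, distances, and rates are made-up example values.

def topology(links):
    """Return the set of undirected node pairs, ignoring link attributes."""
    return {frozenset((a, b)) for a, b, _attrs in links}

# Office network: short copper links at 1 Gbit/s.
office = [
    ("A", "B", {"length_m": 10, "rate_mbps": 1000}),
    ("B", "C", {"length_m": 15, "rate_mbps": 1000}),
    ("C", "A", {"length_m": 12, "rate_mbps": 1000}),
]

# Campus network: long fiber links at 10 Gbit/s, same ring of three nodes.
campus = [
    ("A", "B", {"length_m": 800, "rate_mbps": 10000}),
    ("B", "C", {"length_m": 650, "rate_mbps": 10000}),
    ("C", "A", {"length_m": 900, "rate_mbps": 10000}),
]

# Distances and rates differ, but the node-and-link structure is the same.
same_topology = topology(office) == topology(campus)
print(same_topology)  # True
```

Stripping the attributes before comparing is the point of the sketch: topology is about which nodes are connected, not how far apart or how fast.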

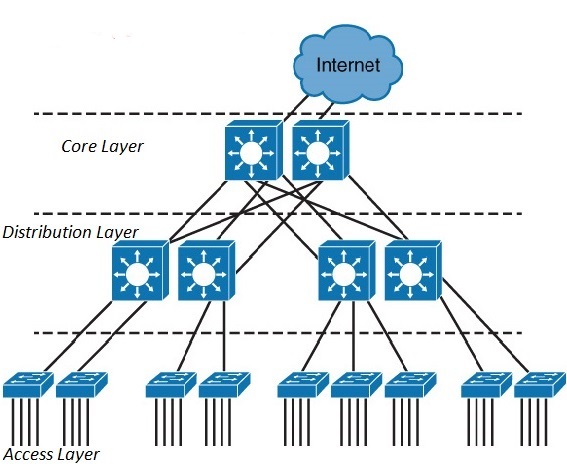

3 Tier

The hierarchical internetworking model is a three-layer (3-tier) model for network design first proposed by Cisco. A hierarchical network design divides the network into discrete layers. Each layer, or tier, in the hierarchy provides specific functions that define its role within the overall network. The model divides enterprise networks into three layers: core, distribution, and access.

1) Access layer

End stations and servers connect to the enterprise at the access layer. Access layer devices are usually commodity switching platforms, and may or may not provide layer 3 switching services. The traditional focus at the access layer is minimizing “cost-per-port”: the amount of investment the enterprise must make for each provisioned Ethernet port. This layer is also called the desktop layer because it focuses on connecting client nodes, such as workstations, to the network.

2) Distribution layer

The distribution layer is the smart layer in the three-layer model. Routing, filtering, and QoS policies are managed at the distribution layer. Distribution layer devices also often manage individual branch-office WAN connections. This layer is also called the Workgroup layer.

3) Core layer

The core network provides high-speed, highly redundant forwarding services to move packets between distribution-layer devices in different regions of the network. Core switches and routers are usually the most powerful, in terms of raw forwarding power, in the enterprise; core network devices manage the highest-speed connections, such as 10 Gigabit Ethernet.
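To make the division of roles concrete, the following sketch traces the path between end stations in a toy three-tier network. All device names are hypothetical, and each device has a single uplink for simplicity; real designs normally use redundant uplinks.

```python
# Hypothetical three-tier network: each device maps to its single uplink.
# Real networks usually have redundant uplinks; this is a simplification.
UPLINK = {
    "pc1": "access1", "pc2": "access2", "pc3": "access3",
    "access1": "dist1", "access3": "dist1",
    "access2": "dist2",
    "dist1": "core", "dist2": "core",
}

def path_to_top(node):
    """Follow uplinks from a node until no further uplink exists."""
    path = [node]
    while path[-1] in UPLINK:
        path.append(UPLINK[path[-1]])
    return path

def path_between(src, dst):
    """Climb from src to the first device shared with dst, then descend."""
    up = path_to_top(src)
    down = path_to_top(dst)
    common = next(n for n in up if n in down)
    return up[:up.index(common) + 1] + down[:down.index(common)][::-1]

# Same distribution block: traffic turns around at the distribution layer.
print(path_between("pc1", "pc3"))
# ['pc1', 'access1', 'dist1', 'access3', 'pc3']

# Different distribution blocks: traffic crosses the core.
print(path_between("pc1", "pc2"))
# ['pc1', 'access1', 'dist1', 'core', 'dist2', 'access2', 'pc2']
```

Only the second path touches the core, which matches its role of moving packets between distribution-layer devices.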

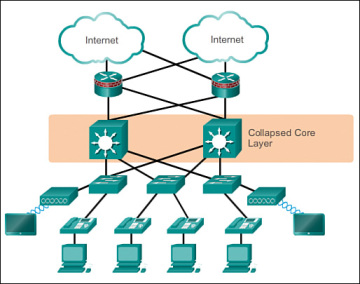

2 Tier

The three-tier hierarchical design maximizes performance, network availability, and the ability to scale the network design. However, many small enterprise networks do not grow significantly larger over time. Therefore, a two-tier hierarchical design where the core and distribution layers are collapsed into one layer is often more practical. A “collapsed core” is when the distribution layer and core layer functions are implemented by a single device. The primary motivation for the collapsed core design is reducing network cost, while maintaining most of the benefits of the three-tier hierarchical model.

Spine-leaf Architectures

Leaf-spine is a two-layer network topology composed of leaf switches and spine switches. Leaf switches mesh into the spine, forming the access layer that delivers network connection points for servers. Every leaf switch in a leaf-spine architecture connects to every spine switch in the network fabric.

With leaf-spine configurations, every device is the same number of segments away from every other device, so traffic experiences a predictable and consistent amount of delay or latency. This is possible because the topology has only two layers, the leaf layer and the spine layer. The leaf layer consists of access switches that connect to devices like servers, firewalls, load balancers, and edge routers. The spine layer (made up of switches that perform routing) is the backbone of the network, where every leaf switch is interconnected with each and every spine switch.
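The equal-distance property can be checked with a small sketch. It builds a full leaf-to-spine mesh and measures the hop count between every pair of leaf switches using breadth-first search. The switch names and fabric size are arbitrary assumptions for illustration.

```python
from collections import deque
from itertools import combinations

def build_leaf_spine(num_leaves, num_spines):
    """Connect every leaf switch to every spine switch (full mesh)."""
    leaves = [f"leaf{i}" for i in range(1, num_leaves + 1)]
    spines = [f"spine{i}" for i in range(1, num_spines + 1)]
    adj = {name: set() for name in leaves + spines}
    for leaf in leaves:
        for spine in spines:
            adj[leaf].add(spine)
            adj[spine].add(leaf)
    return leaves, spines, adj

def hops(adj, src, dst):
    """Breadth-first search returning the hop count from src to dst."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None  # unreachable

leaves, spines, adj = build_leaf_spine(num_leaves=4, num_spines=2)

# Collect the distinct hop counts over all leaf pairs.
hop_counts = {hops(adj, a, b) for a, b in combinations(leaves, 2)}
print(hop_counts)  # {2}
```

Every leaf pair is exactly two hops apart (leaf to spine to leaf), regardless of how many leaves or spines the fabric has.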

To allow for the predictable distance between devices in this two-layered design, dynamic Layer 3 routing is used to interconnect the layers. Dynamic routing allows the best path to be determined and adjusted in response to network changes. This type of network suits data center architectures with a focus on “East-West” network traffic. “East-West” traffic is data that travels inside the data center itself rather than out to a different site or network. The approach addresses the intrinsic limitations of Spanning Tree by using other networking protocols and methodologies to achieve a dynamic network.
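As a rough illustration of a path being adjusted after a network change, the sketch below recomputes a shortest path once a link fails. It is not a routing protocol implementation: breadth-first search stands in for the route calculation, and the four-switch fabric is a made-up example.

```python
from collections import deque

def shortest_path(adj, src, dst):
    """Breadth-first search; returns one shortest path or None."""
    prev = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = prev[node]
            return path[::-1]
        for nxt in sorted(adj[node]):  # sorted for a repeatable result
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None

# Hypothetical fabric: two leaves fully meshed with two spines.
adj = {
    "leaf1": {"spine1", "spine2"},
    "leaf2": {"spine1", "spine2"},
    "spine1": {"leaf1", "leaf2"},
    "spine2": {"leaf1", "leaf2"},
}

before = shortest_path(adj, "leaf1", "leaf2")
print(before)  # ['leaf1', 'spine1', 'leaf2']

# Simulate a failure of the leaf1-spine1 link, then recompute.
adj["leaf1"].discard("spine1")
adj["spine1"].discard("leaf1")

after = shortest_path(adj, "leaf1", "leaf2")
print(after)  # ['leaf1', 'spine2', 'leaf2']
```

The recomputed path still has two hops because the second spine offers an equivalent route, so the failure does not change the distance between the leaves.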

WAN

A wide area network (WAN) is a large network that is not tied to a single location. WANs can facilitate communication, the sharing of information, and much more between devices around the world through a WAN provider. WANs can be vital for international businesses, but they are also essential for everyday use, as the internet is considered the largest WAN in the world.

Wide area networks are a form of telecommunication network that can connect devices in multiple locations across the globe. WANs are the largest and most expansive form of computer network available to date. These networks are often established by service providers that then lease their WAN to businesses, schools, governments or the public. These customers can use the network to relay and store data or communicate with other users, no matter their location, as long as they have access to the established WAN. Access can be granted via different links, such as virtual private networks (VPNs) or lines, wireless networks, cellular networks or internet access.

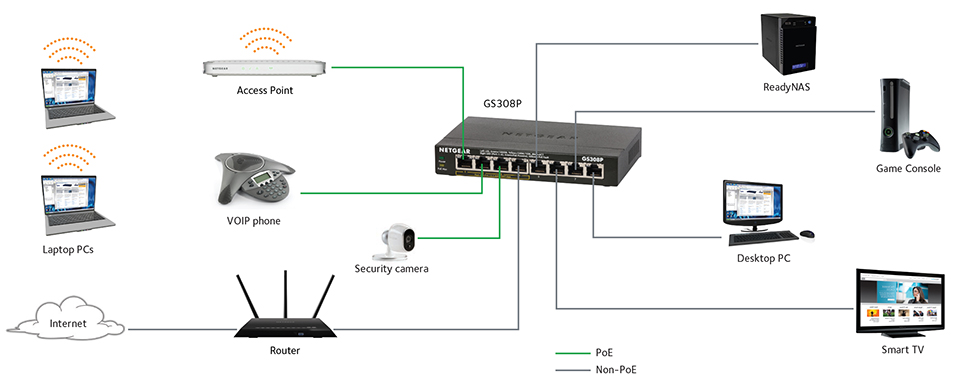

Small office/home office (SOHO)

The small office/home office (SOHO) is one of the fastest-growing segments of the networking market. A small office is one that has 2 to 50 users, while a home office typically has 2 to 5 users. In addition to computers for one or more students, there might be one for a telecommuter, along with a docking station for a mobile professional’s laptop computer. All of these computers can be networked together as peers to save money on printers and other resources, as well as the cost of Internet access.

The most popular network topology for the SOHO environment is 10/100BaseT Ethernet because it is relatively inexpensive and easy to set up and use. The same components used to build large enterprise networks are used to build the SOHO network. These components include cabling, media converters, network adapters, hubs, and a network operating system. To access external networks, a modem or router is needed as well, depending on the type of connection desired.

All three types of media—twisted pair, thin coax, and optical fiber—can be used exclusively or together, depending on the type of network. Media converters are available that allow segments using different media to be linked together. Because media conversion is a physical layer process, it does not introduce significant delay on the network.

In a SOHO environment where just a few computers are networked together, users can get by with a hub, some network adapters, and 10BaseT cables. If significant growth is anticipated in the future, a stackable 10/100 Ethernet hub is the better choice. It provides tighter integration and maximum throughput, and the means to scale up to virtually any number of nodes if necessary without external repeaters or switches.

On-premises and cloud

It’s no surprise that cloud computing has grown in popularity as much as it has, as its allure and promise offer newfound flexibility for enterprises, everything from saving time and money to improving agility and scalability. On the other hand, on-premise software – installed on a company’s own servers and behind its firewall – was the only offering for organizations for a long time and may continue to adequately serve your business needs. Additionally, on-premise applications are reliable, secure, and allow enterprises to maintain a level of control that the cloud often cannot. But there’s agreement among IT decision-makers that in addition to their on-premise and legacy systems, they’ll need to leverage new cloud and SaaS applications to achieve their business goals.

Cloud computing services

Cloud computing differs from on-premises software in one critical way. A company hosts everything in-house in an on-premise environment, while in a cloud environment, a third-party provider hosts all that for you. This allows companies to pay on an as-needed basis and effectively scale up or down depending on overall usage, user requirements, and the growth of a company.

A cloud-based server utilizes virtual technology to host a company’s applications offsite. There are no capital expenses, data can be backed up regularly, and companies only have to pay for the resources they use. For those organizations that plan aggressive expansion on a global basis, the cloud has even greater appeal because it allows you to connect with customers, partners, and other businesses anywhere with minimal effort.

Cloud computing architecture refers to the components and subcomponents required for cloud computing. These components typically consist of front-end platforms (fat client, thin client, mobile device), back-end platforms (servers, storage), cloud-based delivery, and a network (Internet, intranet, intercloud). Combined, these components make up cloud computing architecture.

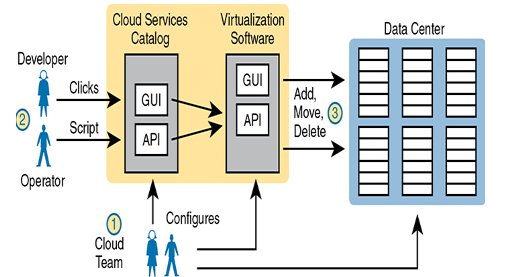

Private Cloud (On-Premise)

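A private cloud provides a service inside a company, to internal customers, that meets the five essential characteristics in the NIST definition of cloud computing: on-demand self-service, broad network access, resource pooling, rapid elasticity, and measured service. To create a private cloud, an enterprise often expands its IT tools (like virtualization tools), changes internal workflow processes, adds additional tools, and so on.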

As an example, consider what happens when an application developer at a company needs VMs to use when developing an application. With private cloud, the developer can request those VMs, and they start automatically and are available within minutes, with most of the time lag being the time to boot the VMs. If the developer wants many more VMs, they can assume that the private cloud will have enough capacity, and new requests are still serviced rapidly. And all parties should know that the IT group can measure the usage of the services for internal billing.

Focus on the self-service aspect of cloud for a moment. To make that happen, many cloud computing services use a cloud services catalog. That catalog exists for the user as a web application that lists anything that can be requested via the company’s cloud infrastructure. Before using a private cloud, developers and operators who needed new services (like new VMs) sent a change request asking the virtualization team to add VMs. With private cloud, the (internal) consumers of IT services—developers, operators, and the like—can click to choose from the cloud services catalog. And if the request is for a new set of VMs, the VMs appear and are ready for use in minutes, without human interaction for that step.

To make this process work, the cloud team has to add some tools and processes to its virtualized data center. For instance, it installs software to create the cloud services catalog, both with a user interface and with code that interfaces to the APIs of the virtualization systems. That services catalog software can react to consumer requests, using APIs into the virtualization software, to add, move, and create VMs, for instance. Also, the cloud team—composed of server, virtualization, network, and storage engineers—focuses on building the resource pool, testing and adding new services to the catalog, handling exceptions, and watching the reports (per the measured service requirement) to know when to add capacity to keep the resource pool ready to handle all requests.
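The interaction between the services catalog, the resource pool, and usage reporting can be sketched as follows. Everything here is hypothetical: the class names, the vCPU-based sizing, the offering names, and the 70% capacity threshold are illustrative assumptions, and `allocate` merely stands in for a call to a real virtualization API.

```python
class ResourcePool:
    """Hypothetical pool of vCPUs shared by all catalog requests."""

    def __init__(self, total_vcpus):
        self.total_vcpus = total_vcpus
        self.used_vcpus = 0

    def allocate(self, vcpus):
        # Stand-in for a call into the virtualization system's API.
        if self.used_vcpus + vcpus > self.total_vcpus:
            raise RuntimeError("resource pool exhausted")
        self.used_vcpus += vcpus

    def utilization(self):
        return self.used_vcpus / self.total_vcpus


class ServicesCatalog:
    """Hypothetical self-service catalog backed by a resource pool."""

    def __init__(self, pool, offerings):
        self.pool = pool
        self.offerings = offerings  # offering name -> vCPUs per VM
        self.usage_log = []         # measured service: (consumer, offering, count)

    def request(self, consumer, offering, count=1):
        """Fulfil a request without human interaction and record the usage."""
        self.pool.allocate(self.offerings[offering] * count)
        self.usage_log.append((consumer, offering, count))
        return [f"{offering}-{consumer}-{i}" for i in range(1, count + 1)]


pool = ResourcePool(total_vcpus=64)
catalog = ServicesCatalog(pool, {"small-vm": 2, "large-vm": 8})

vms = catalog.request("dev-team", "small-vm", count=4)   # uses 8 vCPUs
catalog.request("ops-team", "large-vm", count=5)         # uses 40 vCPUs

# The cloud team watches utilization to decide when to add capacity.
needs_capacity = pool.utilization() > 0.7  # assumed threshold
print(vms[0], pool.utilization(), needs_capacity)
# small-vm-dev-team-1 0.75 True
```

The usage log corresponds to the measured-service reports mentioned above, and the utilization check is one simple way the team might decide when to grow the pool.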
